Professor Yousun Kang, Department of Engineering, Faculty of Engineering

This study presents a framework for point-supervised temporal action localization that targets fine-grained actions such as Japanese fingerspelling and subtle gestures.

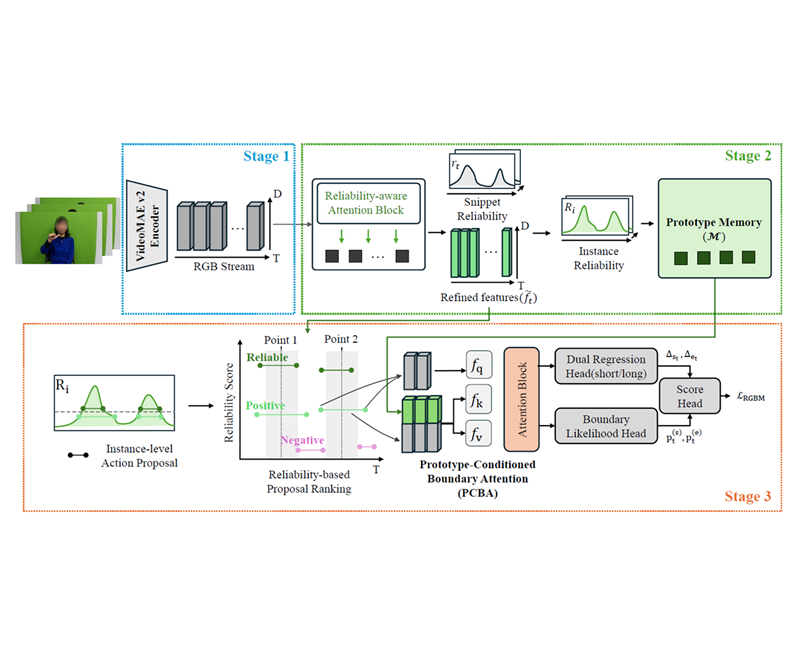

The proposed framework, termed ReGBoM, reconceptualizes conventional reliability-propagation-based methods from passive inference to active, boundary-oriented reasoning.

The ultimate objective is to significantly enhance the detection accuracy of fine-grained action boundaries under sparse point-level supervision.
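To make the supervision setting concrete, the following minimal sketch contrasts a full segment annotation with a point-level annotation, in which each action instance is labeled by a single timestamp and a class. The class names, the `to_point_label` helper, and the example label are hypothetical illustrations and are not taken from the ub-MOJI dataset or the ReGBoM code.

```python
from dataclasses import dataclass


@dataclass
class SegmentLabel:
    """Full supervision: both temporal boundaries are annotated (seconds)."""
    start: float
    end: float
    label: str


@dataclass
class PointLabel:
    """Point-level supervision: one timestamp inside the action, no boundaries."""
    t: float
    label: str


def to_point_label(seg: SegmentLabel, position: float = 0.5) -> PointLabel:
    """Reduce a segment to a single timestamp at a relative position in [0, 1].

    This only simulates what an annotator provides under point supervision;
    the start and end times are discarded.
    """
    if not 0.0 <= position <= 1.0:
        raise ValueError("position must lie in [0, 1]")
    t = seg.start + position * (seg.end - seg.start)
    return PointLabel(t=t, label=seg.label)


# Hypothetical example: a one-second fingerspelling action labeled "a".
segment = SegmentLabel(start=2.0, end=3.0, label="a")
point = to_point_label(segment)
print(point)  # PointLabel(t=2.5, label='a')
```

A localization model trained on such point labels must recover `start` and `end` on its own, which is why boundary ambiguity matters for short, rapid actions.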

【Background and Objective】

Existing point-supervised learning methods have largely emphasized capturing the global structure of actions. However, for fine-grained actions such as fingerspelling, where motion transitions are rapid and highly subtle, ambiguity in temporal boundaries often leads to fragmented predictions and over-extended localization results. To overcome these limitations, this study introduces a novel approach that leverages reliability information as an active signal for temporal boundary formation.

【Proposed Method】

The proposed method, ReGBoM, consists of three principal components. First, PCBA Attention aligns boundary queries by anchoring them to highly reliable prototypes. Second, a Dual Regression Structure achieves stable temporal extent estimation by integrating short- and long-term temporal dependencies. Third, Angle Refinement is introduced to capture delicate transitional patterns in fine-grained motion. Together, these components enable accurate temporal boundary estimation even from sparse point annotations.

【Expected Outcomes and Significance】

Experimental results on the ub-MOJI[1] Japanese fingerspelling dataset demonstrate that the proposed method improves mAP by 9.0% over prior approaches at the challenging tIoU threshold of 0.7. In addition, its generalizability has been confirmed on standard benchmarks such as GTEA[2]. The proposed framework is therefore expected to make meaningful contributions to application domains that require precise motion understanding, including sign language translation and medical rehabilitation support.
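For readers unfamiliar with the metric, the sketch below shows how temporal IoU (tIoU) is commonly computed and why a threshold of 0.7 is demanding. The function name and the example segments are illustrative assumptions, not the evaluation code used in this study.

```python
def temporal_iou(pred, gt):
    """Temporal IoU between two (start, end) segments given in seconds."""
    inter = max(0.0, min(pred[1], gt[1]) - max(pred[0], gt[0]))
    union = (pred[1] - pred[0]) + (gt[1] - gt[0]) - inter
    return inter / union if union > 0 else 0.0


# Hypothetical ground-truth action lasting one second.
gt = (2.0, 3.0)

predictions = {
    "tight": (2.125, 3.0),         # boundaries close to the ground truth
    "over_extended": (1.5, 3.5),   # covers the action but spills on both sides
    "fragment": (2.0, 2.25),       # only a small piece of the action
}

for name, seg in predictions.items():
    iou = temporal_iou(seg, gt)
    verdict = "hit" if iou >= 0.7 else "miss"
    print(f"{name}: tIoU={iou:.3f} -> {verdict} at threshold 0.7")
```

In this toy case only the tight prediction (tIoU 0.875) counts as a correct detection at 0.7; the over-extended prediction (0.5) and the fragment (0.25) are both rejected, which mirrors the two failure modes described in the background section.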

References

[1] Kondo, T., Murai, R., Tsuta, N., and Kang, Y., “ub-MOJI,” https://huggingface.co/datasets/kanglabs/ub-MOJI, 2025.

[2] Fathi, A., Ren, X., and Rehg, J. M., “Learning to Recognize Objects in Egocentric Activities,” in Proceedings of the IEEE CVPR, pp. 3281–3288, 2011.